Project Director

- Ulrich Paquet

Project Manager & Workshop Lead

- Michael Alummoottil

NLP AI Expert

- Ayman Saeed

- Similoluwa Adetoyosi Okunowo

- Toky Iriana RAJAOFERA

- Maharavosoaniaina Mari-Mar RAKOTONIRAINY

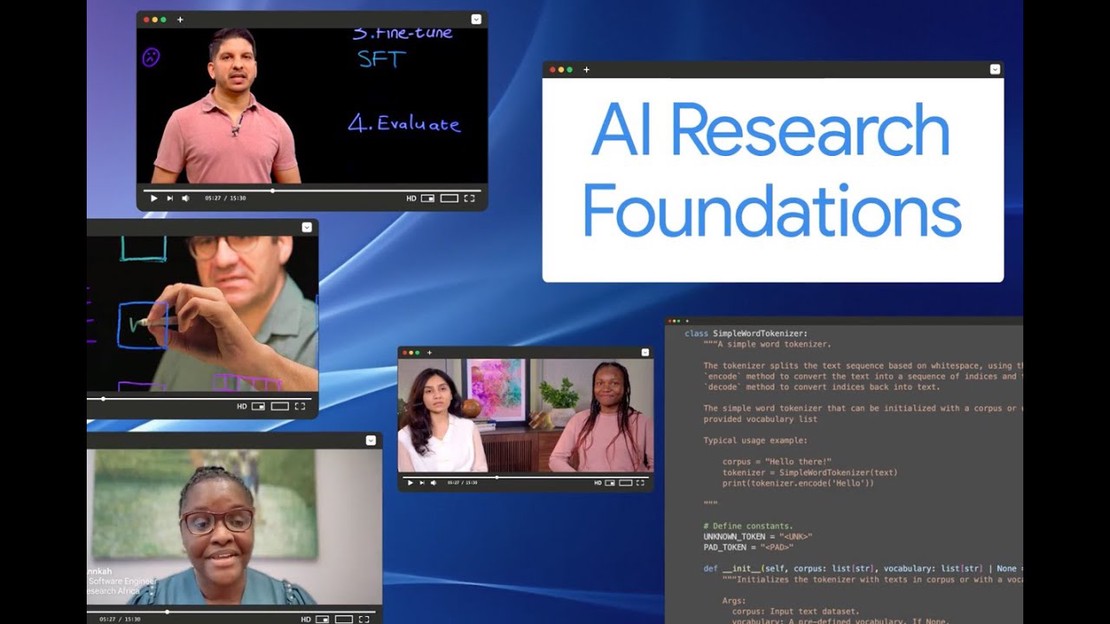

Building on the Google DeepMind AI Research Foundations course and a blended learning toolkit developed by pedagogy experts at UCL in collaboration with African science educators, the programme is designed to deliver scalable, sustainable, and locally relevant instruction in AI and Large Language Models across African universities. It is supported by Google.org and implemented by FATE Foundation and AIMS South Africa.

Africa’s universities are producing the continent’s next generation of scientists, engineers, and innovators, but most lack the faculty expertise, up-to-date curriculum, and hands-on resources to teach advanced AI effectively. Students who want to build language models, train neural networks, or work on real AI research often have nowhere to turn locally.

AI Research Foundations changes that. Through a Train-the-Trainer model, we upskill your lecturers — turning them into certified “AI Champions” — so they can deliver a world-class, localized AI course to your students, at your university, in your context. The programme runs from 2026 to 2028, reaching 30 universities across Ghana, Kenya, Nigeria, and South Africa.
